Why Documentation Always Loses to Reality

Why documentation always loses to reality, how AI makes the gap unsurvivable, and what comes after the artifact.

It happens like this. You hit an error and need an answer. You go to the wiki first, search for the right page, find a stub from 2022 that doesn't quite match. You move to Slack, scrolling old threads from people who've since left the company. You open the codebase hoping to infer the answer from the source. Eventually you give up and ask in #help. Hours have passed.

This is the universal experience of working in a complex system, and it has been for as long as software has had documentation. Any single doc might be perfectly fine. The whole stack of documentation, taken together, is somehow less reliable than asking a senior engineer in Slack. When asking is easier than using the documentation, the documentation has already lost.

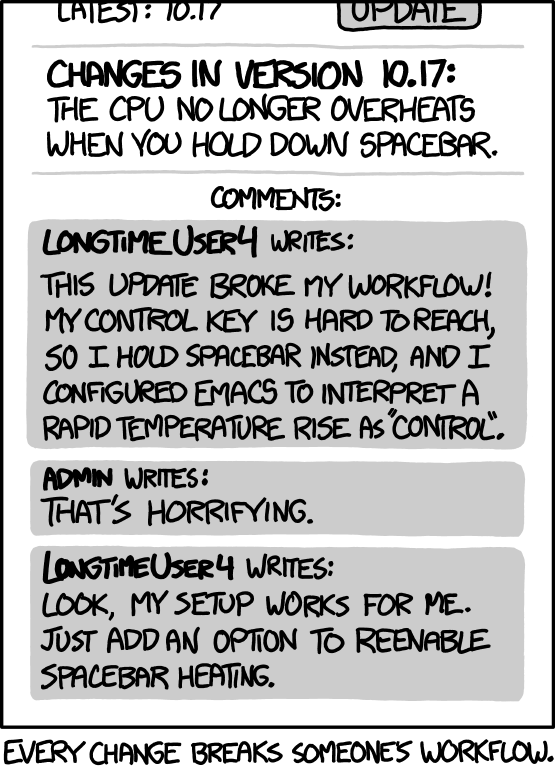

The standard explanation is a discipline problem. People don't update the docs. Teams don't prioritize it. Leadership doesn't enforce it. That story has been told for decades, and the cure has been the same. Write more, write better, write earlier, write together, write to the standard. None of it changed the outcome.

So maybe the diagnosis is wrong.

The failure is structural

"Try harder" hasn't worked. We have tried writing in wikis, then in Confluence, then in Notion. We have tried docs-as-code, version control for documentation, quarterly review cycles, doc owners with explicit accountability. We have tried hiring technical writers. We have tried mandating updates as part of definition-of-done. Each new tool promised a structural improvement and delivered a discipline improvement. Each one decayed on the same timeline.

The failure lives one layer down, in the artifact itself, beyond what discipline can reach.

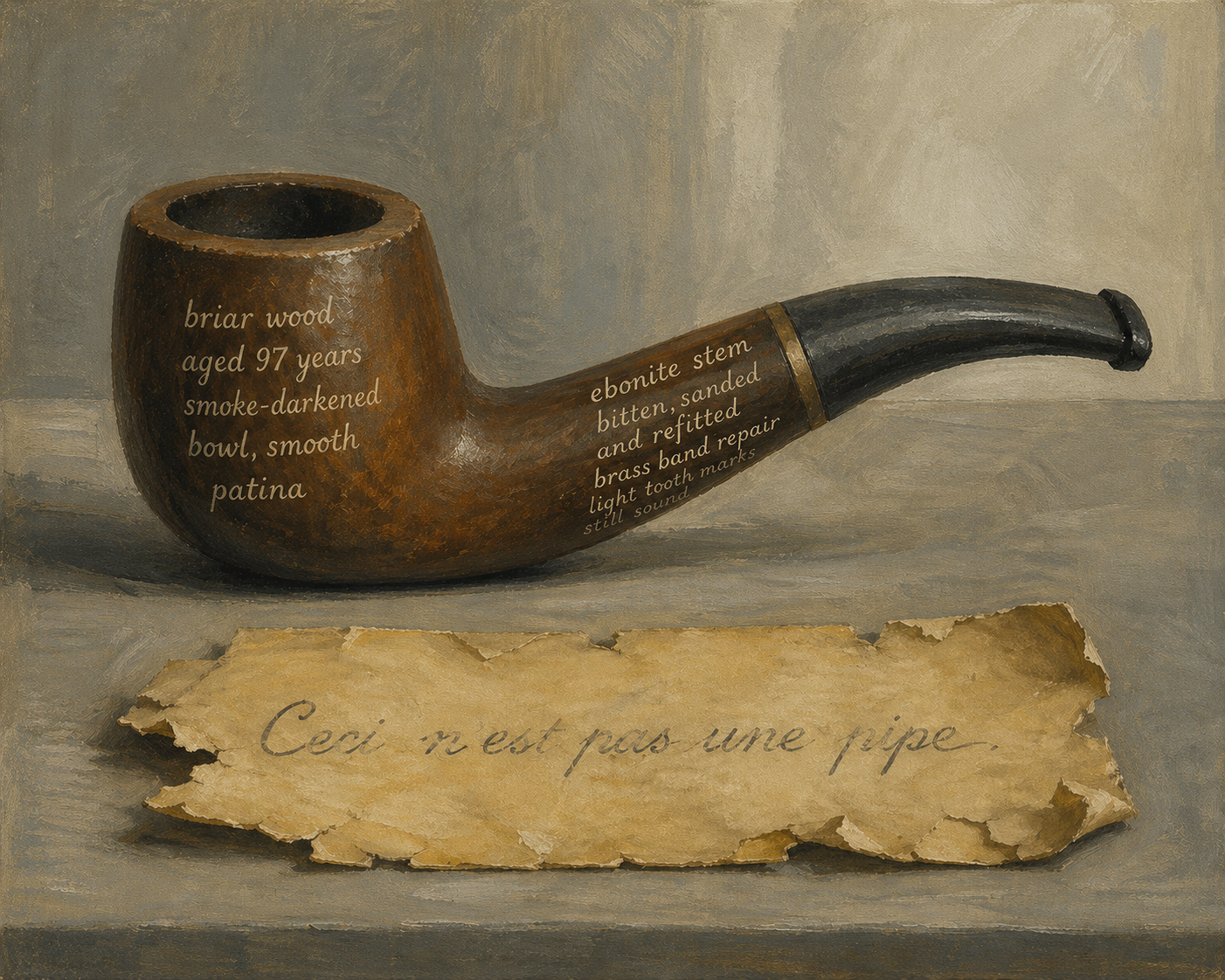

In a previous post, I argued that data is an artifact: something written by someone, somewhere, under conditions that made sense at the time. Strip the conditions away and you are left guessing. The same logic applies to documentation. A wiki page is an artifact. So is an OpenAPI spec. So is an ADR. Each one is a snapshot taken under conditions that have since changed, and under assumptions about consumers that were never complete to begin with. A page can describe the system, but it can't be the system. That gap is structural.

Auto-generation is solving the wrong problem

The obvious fix is to take humans out of the loop. Documentation written by people lags by nature. Generate it from code in near real time, type-check it, run it in CI. OpenAPI specs, JSDoc, Mintlify, Stoplight. Pages of tooling exist for exactly this. The artifact stays current with the source because the source should be the source of truth (see "Code as Documentation" by Martin Fowler).

But the reality keeps moving anyway.

A team ships a search endpoint with a clean OpenAPI spec: query in, list of results out. The spec doesn't say what happens when there are zero results. The implementation returns an empty list. The frontend starts iterating over results unconditionally. Six months later, a backend optimization omits the results field entirely when there are no matches. The spec doesn't change. The frontend starts throwing on undefined.length in production. Nobody specified the empty-array shape because nobody thought it was a decision. The spec, freshly regenerated last night, still describes a contract that hasn't been broken.
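The failure can be reproduced in a few lines. The sketch below uses Python rather than the frontend's JavaScript, so the crash shows up as a `KeyError` instead of a read on `undefined`. The function names (`search_v1`, `search_v2`, `count_results`) and the response shape are illustrative assumptions, not the team's actual code.

```python
from typing import Any


def search_v1(query: str) -> dict[str, Any]:
    """Original behavior: always returns a 'results' key, possibly empty."""
    corpus = ["alpha", "beta", "gamma"]
    return {"results": [item for item in corpus if query in item]}


def search_v2(query: str) -> dict[str, Any]:
    """After the optimization: omits 'results' when nothing matches."""
    response = search_v1(query)
    if not response["results"]:
        return {}
    return response


def count_results(response: dict[str, Any]) -> int:
    """Consumer written against v1: assumes 'results' is always present."""
    return len(response["results"])


def count_results_defensive(response: dict[str, Any]) -> int:
    """Consumer that treats a missing field the same as an empty list."""
    return len(response.get("results", []))
```

If the spec never marks `results` as required, both versions conform to it. Only the consumer that relied on the unwritten empty-list behavior breaks, and it breaks only on queries with no matches.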

Hyrum's Law names the dynamic:

With a sufficient number of users of an API, it does not matter what you promise in the contract: all observable behaviors of your system will be depended on by somebody.

Hyrum's Law was written about API stability, but it diagnoses a broader gap. A spec records the behavior an author thought worth writing. The system has more behavior than that, and consumers couple to all of it. No amount of generation closes that gap, because the gap isn't a freshness problem.

The spec captures what the author chose to specify. Consumers depend on behaviors it was never going to enumerate: error envelopes, response timings, sort orders, and other assumptions nobody wrote down. Schema registries and contract tests help, but they can't close the gap, because what consumers couple to is always larger than what anyone thought to write down.
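One way to see both the value and the limit of contract tests is a consumer-side check that pins behaviors the spec never mentions. This is a minimal sketch, assuming a hypothetical `search` provider and two consumer expectations chosen for illustration (no matches yields an empty list; results come back sorted).

```python
from typing import Callable

Response = dict[str, list[str]]
Api = Callable[[str], Response]


def search(query: str) -> Response:
    """Hypothetical provider; stands in for the real service."""
    corpus = ["gamma", "alpha", "beta", "alphabet"]
    return {"results": sorted(item for item in corpus if query in item)}


# Each check pins one observable behavior a consumer relies on.
CONSUMER_EXPECTATIONS: dict[str, Callable[[Api], bool]] = {
    "empty match returns an empty list":
        lambda api: api("zzz").get("results") == [],
    "results are sorted ascending":
        lambda api: api("a")["results"] == sorted(api("a")["results"]),
}


def broken_expectations(api: Api) -> list[str]:
    """Return the names of pinned behaviors the provider no longer exhibits."""
    failures = []
    for name, check in CONSUMER_EXPECTATIONS.items():
        try:
            ok = check(api)
        except Exception:
            # A crash while checking counts as a broken expectation.
            ok = False
        if not ok:
            failures.append(name)
    return failures
```

The check catches only what someone thought to pin. Response timings, error envelopes, and every behavior nobody listed stay outside it.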

The code stays. What shifts is the meaning attached to it, and the boundary that consumers actually depend on. The same field name, the same endpoint, the same signature points at different things at different times. Auto-generation closes the freshness gap and leaves the meaning gap untouched.

Capturing the why

Maybe the deeper issue is that documentation captures the what but says nothing about the why. A wiki page tells you the current state of a system. It does not tell you why the system is built the way it is, what the team was solving for, what tradeoffs they accepted.

Architecture Decision Records (ADRs, proposed by Michael Nygard in 2011) exist precisely to fix this. Capture the decision, its context, and what would change your mind. The original design also handles change correctly: an accepted ADR is never edited; it is superseded by a later ADR that links back to it. The chain forms a log of which decision governed which period.
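The supersession chain can be read as a small data structure. The sketch below assumes a single chain of ADRs on one topic, and the `ADR` fields, the `governing_adr` helper, and the sample records are all hypothetical, chosen only to show how the log answers "which decision was in force on this date?".

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)  # frozen: an accepted ADR is never edited
class ADR:
    number: int
    title: str
    accepted_on: date
    supersedes: Optional[int] = None  # number of the ADR this one replaces


def governing_adr(chain: list[ADR], on: date) -> Optional[ADR]:
    """Return the latest ADR in the chain accepted on or before the given date."""
    in_force = [adr for adr in chain if adr.accepted_on <= on]
    return max(in_force, key=lambda adr: adr.accepted_on, default=None)


# Illustrative records only, not taken from any real project.
chain = [
    ADR(7, "Store sessions in the primary database", date(2022, 3, 1)),
    ADR(19, "Move sessions to a dedicated cache", date(2023, 9, 15), supersedes=7),
]
```

Here `governing_adr(chain, date(2023, 1, 1))` returns ADR 7 and a date in 2024 returns ADR 19, so the chain works as a timeline of accepted decisions. It says nothing about changes that never became an ADR.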

So if we capture the state in wikis and the decisions (state transitions) in ADRs, are we covered?

In practice, the format strains. Pureur and Bittner describe how ADRs degrade into "Any Decision Records" when teams record every choice as architectural. Their fix is taxonomic: separate kinds of records for separate kinds of decisions, so the architectural ones stay distinct.

Per Møller Zanchetta goes further. He argues that ADRs fail when teams treat them as documentation rather than infrastructure. "Documentation captures what already happened; infrastructure shapes what happens next." His fix is to embed ADRs into version control, PR reviews, and sprint rituals, treating them as the medium in which decisions get made rather than artifacts to be referenced later.

Both critiques are right about the symptoms. They miss the deeper force: architecture accumulates, with or without your records.

ADRs document the conscious decisions: the moments when a team paused, weighed alternatives, and chose. The cost of that ceremony is real, and teams reserve it for decisions that feel architectural at the time.

A team adds a "remember me" checkbox to the login form. It bypasses the standard refresh-token flow because the PM wants thirty-day sessions and the standard flow caps at 24 hours. No ADR; the change looks like a UX tweak. Eighteen months later, three other features have been built on the 24-hour assumption. A fraud-detection rule keying off re-auth frequency starts flagging the long-session cohort as suspicious. Nobody connects the dots until a support engineer spots the same user IDs in three unrelated tickets. The ADR chain still describes an authentication system that no longer exists. The change was below the ADR threshold. Its accumulated effect was architectural.
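A sketch of how that coupling might look in code. The rule, the threshold, and the field names are hypothetical. The point is that the fraud rule bakes in the 24-hour cap as a constant, and nothing ties that constant to the login form's new thirty-day path.

```python
from dataclasses import dataclass

STANDARD_SESSION_HOURS = 24  # the cap the standard refresh-token flow enforces


@dataclass
class UserActivity:
    user_id: str
    active_days: int   # days with at least one request in the window
    reauth_count: int  # times the user re-authenticated in the window


def looks_suspicious(activity: UserActivity) -> bool:
    """Hypothetical fraud rule: an active user who rarely re-authenticates
    is assumed to be riding a stolen or replayed session.

    The rule assumes every session expires within 24 hours, so an honest
    user should re-authenticate roughly once per active day.
    """
    expected_reauths = activity.active_days * 24 // STANDARD_SESSION_HOURS
    return activity.reauth_count < expected_reauths // 2


standard_user = UserActivity("u1", active_days=30, reauth_count=28)
remember_me_user = UserActivity("u2", active_days=30, reauth_count=1)
```

Under these assumptions `looks_suspicious(standard_user)` is false and `looks_suspicious(remember_me_user)` is true, even though both users behave honestly. Neither piece of code is wrong on its own terms.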

Someone will say: that should have been an ADR. But the threshold is exactly the thing that makes ADRs work. Lower it, and you get the "Any Decision Records" sprawl Pureur and Bittner described. Hold it, and the architecture that emerges below it is invisible by construction. The format is doing exactly what it was designed to do, and the architecture accumulates anyway.

AI worsens the gap

Klarna's CEO, Sebastian Siemiatkowski, named this problem. On a recent episode of the 20VC podcast, he said something most teams suffer but won't say out loud:

Customer service agents (...) need as much context as possible. But where is that context? It's in the source code of your software (...) We can have documentation of that, but at the truth, it's somewhere deep in our source code (...) and the documentation may be inaccurate. So you realize sooner or later a customer services agent needs to read the source code to be able to answer a question.

His conclusion was operational. A customer service agent, human or AI, eventually has to read the source code to answer accurately. Klarna couldn't buy a solution off the shelf, so it built one. That is a multi-billion-dollar fintech, with every reason to make documentation work, publicly stating that the truth lives in the source code. If Klarna's resources couldn't close the gap, most companies aren't going to.

For humans, stale documentation has always been expensive but bounded. An engineer wastes an afternoon, learns to distrust the internal docs, and asks a senior engineer. Senior engineers become the knowledge bottleneck and teams build tribal knowledge to fill the gap. Productivity takes a hit, but the company doesn't fall over.

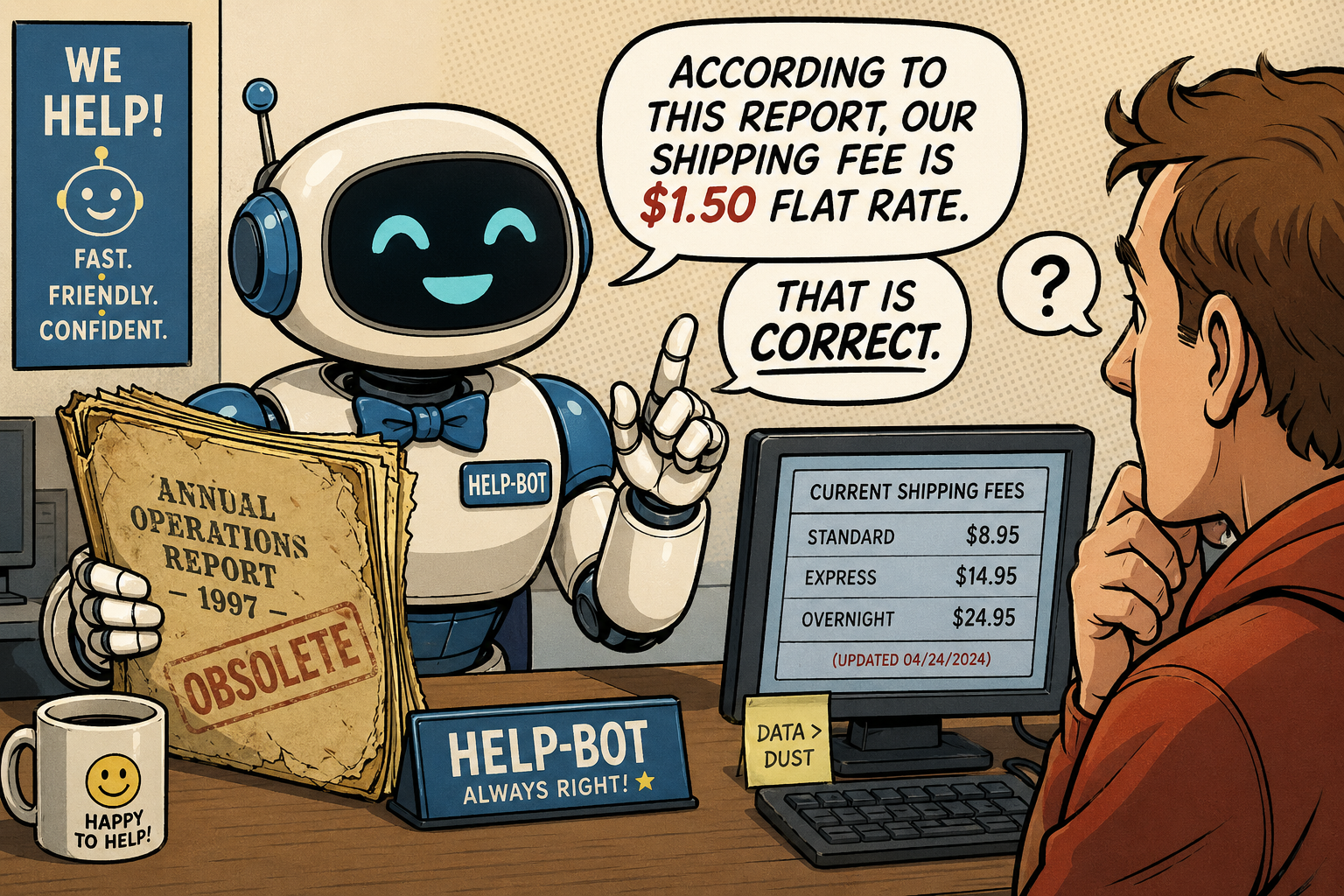

For agents, the cost shifts. Agents read at machine speed and have no senior engineer to ask. Even when they hedge, they can only verify against the spec, and the behaviors that bite live outside it. A stale wiki page that cost a human a slow afternoon costs an agent a wrong decision in production. Same failure mode, larger blast radius.

The asymmetry is what becomes load-bearing. Humans tolerated stale artifacts because they interpret and double-check. Agents don't. They commit to whatever's in front of them at machine speed.

The system has the context

Documentation and data share the same failure mode. They are artifacts written once and then asked to track a system that keeps moving, which they never really could. Now agents are reading those artifacts at machine speed and acting on them.

The shift is in where the context lives. The system itself has the context. Documentation was always a parallel description trying to track it from the outside.

The reader has changed. Where a human consulted a page and reasoned around its gaps, an agent queries the system directly.

A system that carries its own context exposes what consumers actually couple to. Live schemas you can introspect, runtime endpoints that report which behaviors are currently in force, MCP servers that let an agent ask the running system what it does rather than what someone once wrote down. The empty-array case from earlier is no longer a question for the spec; the agent queries the service and sees the current shape. The remember-me change leaves a trace in the same surface the next agent will read.
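A minimal in-process sketch of that idea, with no MCP server or network involved. The `SearchService` class, its `describe` method, and the probe queries are assumptions made for illustration. The description is derived from real calls to the service, so it reflects the current behavior rather than a written spec.

```python
from typing import Any


def shape_of(value: Any) -> Any:
    """Summarize the structure of a live value: field names and type names."""
    if isinstance(value, dict):
        return {key: shape_of(inner) for key, inner in value.items()}
    if isinstance(value, list):
        # Describe a list by the shape of its first element, if it has one.
        return [shape_of(value[0])] if value else []
    return type(value).__name__


class SearchService:
    """Hypothetical service that can describe its own current behavior."""

    def search(self, query: str) -> dict[str, Any]:
        hits = [{"id": 1, "title": "alpha"}] if query == "alpha" else []
        # Current behavior: the field is omitted when there are no matches.
        return {"results": hits} if hits else {}

    def describe(self, probe_queries: list[str]) -> dict[str, Any]:
        """Report the response shape observed for each probe query."""
        return {query: shape_of(self.search(query)) for query in probe_queries}
```

Calling `SearchService().describe(["alpha", "zzz"])` shows a populated `results` list for the first probe and an empty object for the second, which is exactly the difference the regenerated spec never surfaced.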

The question changes fundamentally. Instead of asking "what does the documentation say about this?", the consumer asks "what does the system do right now?". The system becomes queryable not just for its current state, but for the behavior it's running, the assumptions it carries, and who depends on them.

In practice, that looks like this. A team asks the payments service what schema it returns right now and gets the answer directly, without having to trust the spec. An agent, before acting on an endpoint, finds out which consumers depend on which variation of the contract. A team about to deprecate a field sees who's actually using it in production, in real time.
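The deprecation case can be sketched as a usage log that records which consumer reads which field. The class names, consumer names, and payload below are hypothetical. A real system would need this instrumentation in a client SDK or gateway, because reads happen in remote consumers, whereas this sketch keeps everything in one process.

```python
from collections import defaultdict
from typing import Any


class FieldUsageLog:
    """Records which consumer read which response field."""

    def __init__(self) -> None:
        self._readers: dict[str, set[str]] = defaultdict(set)

    def record(self, consumer: str, field: str) -> None:
        self._readers[field].add(consumer)

    def consumers_of(self, field: str) -> list[str]:
        """Consumers seen reading the field, in alphabetical order."""
        return sorted(self._readers.get(field, set()))


class TrackedResponse:
    """Wraps a response dict and logs every field a consumer reads."""

    def __init__(self, data: dict[str, Any], consumer: str, log: FieldUsageLog) -> None:
        self._data = data
        self._consumer = consumer
        self._log = log

    def __getitem__(self, field: str) -> Any:
        self._log.record(self._consumer, field)
        return self._data[field]


log = FieldUsageLog()
payment = {"amount": 100, "currency": "EUR", "legacy_ref": "abc"}
TrackedResponse(payment, "billing-ui", log)["legacy_ref"]
TrackedResponse(payment, "ledger-sync", log)["amount"]
```

After these two reads, `log.consumers_of("legacy_ref")` names `billing-ui` and `log.consumers_of("currency")` is empty. The answer comes from observed reads, so it covers only the traffic the log has actually seen.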

This does not retire the wiki. Onboarding, narrative, conceptual explanations should still be written by people. The load-bearing layer underneath shifts. The artifact stops being asked to track what the system does, because the system can answer for itself.

This is one of the insights that motivated me to start Dipolo AI. If you've watched documentation lose to reality more times than you'd like to admit, I'd love to talk. Send me a message: raphael@dipolo.ai.